Speech recognition has developed at a furious pace in recent years, from being an annoying feature few people used because it never worked, to something that works most of the time. Provided that your native language is considered important by the companies developing the software, it’s likely that it can identify and transcribe most things you say.

But can it be used for language learning? More specifically, can the speech recognition function in your phone be used to learn Mandarin pronunciation?

At first glance, this might seem like a stupid question. If it can identify what a native speaker is saying and transcribe it accurately to Chinese characters, doesn’t that automatically mean that you can use it to verify your pronunciation by reading something from your textbook and seeing whether what your phone thinks you’re saying matches what you intended to say?

Not necessarily!


Can speech recognition be used to learn Mandarin pronunciation?

There are two problems here.

- Speech recognition doesn’t necessarily work for what you want to practise. What ends up on your screen after you’ve said something is not the result of just analysing sound and transcribing it to text; it relies heavily on context and what you are likely to say, based on tons of user data (including your own). In general, therefore, we should expect speech recognition to do a good job with common sentences, but not be too optimistic about single-syllable words (no context) and weird sentences.

- Speech recognition risks being too lenient for language learning purposes. The goal of this type of software is to understand what you’re trying to say, regardless of how you say it. This means that companies are making an effort to make sure you are understood even if you have a heavy accent, foreign or otherwise (see this article, for instance). As a result, it could be too lenient for checking your pronunciation.

I will explore both these questions. In this first part, I will discuss the first problem. The goal is to figure out how well speech recognition does in general, provided that the input is 100% correct.

In other words, this article is about identifying false negatives, i.e. when speech recognition tells us something is wrong, but it actually isn’t. To check this, I will use clearly enunciated Mandarin produced by a native teacher to see how well speech recognition does when it comes to transcribing single syllables, two-syllable words and phrases.

This article will answer this question:

If I say something and the speech recognition spits out something else, does that really mean that my pronunciation is bad, or could it be that the speech recognition is just not good enough?

Naturally, to fully answer this question, we also need to look at non-perfect but still acceptable pronunciation, which will be the focus of the next article.

In the second article, I will discuss the second problem, whether speech recognition is too lenient for language learning purposes. To achieve this, I will use student recordings to see how speech recognition deals with incorrectly pronounced syllables, words and sentences, looking for false positives. That article will answer the question:

If I say something and the speech recognition spits out exactly what I intended to say, does that really mean that my pronunciation is good, or could it be that the speech recognition is too lenient?

There is actually a third question here about using speech recognition in various apps for learning Chinese, some of which might be purpose-built, but I’ll leave that for a possible follow-up article later.

If the speech recognition gets it wrong, does that mean that your pronunciation is bad?

Let’s investigate how well speech recognition does, provided that the input is standardised Mandarin and clearly enunciated by a native teacher.

The audio used here was first recorded, then played back through loudspeakers to two phones: a OnePlus 6 running Android 9, and an iPhone 6 running iOS 12. The words and sentences come from my pronunciation course.

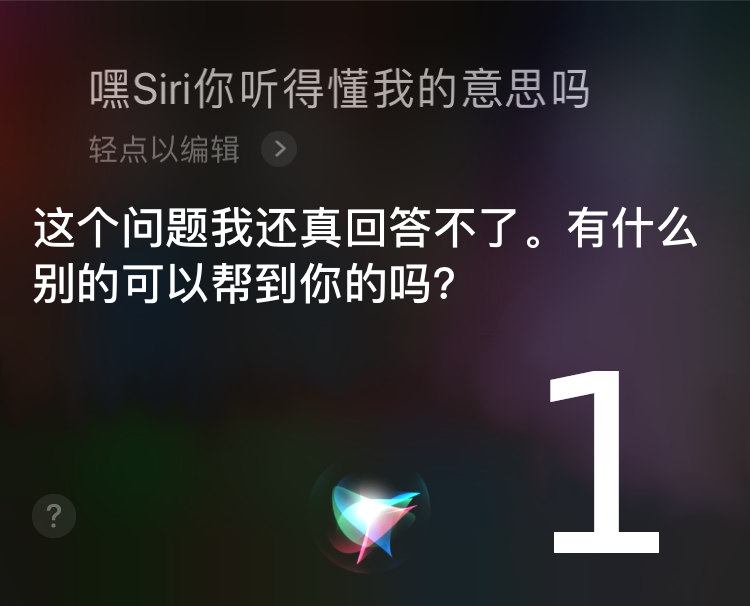

On Android, the dictation function was used, but on the iPhone, Siri was used (for some reason, the dictation function with Siri enabled just did not work at all). I did my best to reset voice data on both phones (i.e. switched accounts, cleared all voice data and so on). I also set Chinese as the only input language to avoid confusion between languages (沙 being recognised as the name Shaw, for example).

Before we dive into the results, here’s a quick reminder of what we’re doing and why. The idea is that by using recordings we know are correct, we know that any instance of the speech recognition getting it wrong is a result of bad software, not bad pronunciation.

The results are split across monosyllabic words, disyllabic words and short phrases. For each, the teacher recordings are provided, along with what both phones thought the recordings said.

A) Monosyllabic words

Below, the results for single-syllable words are presented in the following way:

- The number of the item

- The utterance in Pinyin with attached audio (click to play)

- My judgement (not used in this article, but I want to maintain consistency with the follow-up article, where my comments about student pronunciation will be more important)

- My score: 0 means “this is likely to be perceived as the wrong syllable” and 3 means “very likely to be perceived as the right syllable”.

- Google’s guess: Please note that the software can’t know which specific character the speaker is reading, so any character with the same pronunciation is considered correct.

- Google’s score: One point is earned for each correct identification, out of three possible.

- Apple’s guess: Please note that the software can’t know which specific character the speaker is reading, so any character with the same pronunciation is considered correct.

- Apple’s score: One point is earned for each correct identification, out of three possible.
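The scoring rule in the list above can be sketched in a few lines of Python. This is only an illustration, not the procedure used for the tables in this article, where the scores were assigned by hand. The `READINGS` lookup and the function names are hypothetical, the lookup is filled in manually with just enough characters for the example, and characters with more than one reading are ignored for simplicity.

```python
# Hypothetical, hand-made lookup from character to Pinyin reading.
# A real test would need a full pronunciation dictionary and would
# have to handle characters with several readings.
READINGS = {
    "波": "bō", "播": "bō", "拨": "bō",
    "志": "zhì", "至": "zhì", "据": "jù",
    "雨": "yǔ", "与": "yǔ", "语": "yǔ", "音": "yīn",
}


def to_pinyin(text):
    """Return the list of syllables for a transcription, or None if unknown."""
    try:
        return [READINGS[ch] for ch in text]
    except KeyError:
        return None


def score_item(target_pinyin, guesses):
    """One point per attempt whose transcription is exactly the target syllable.

    Any character with the same reading counts as correct, but an extra
    syllable (e.g. 语音 for yǔ) does not.
    """
    return sum(1 for guess in guesses if to_pinyin(guess) == [target_pinyin])


print(score_item("bō", ["播", "播", "播"]))        # homophones of 波 are accepted
print(score_item("zhì", ["据", "据", "据"]))        # different syllable, no points
print(score_item("yǔ", ["语音", "语音", "语音"]))   # parsed as two syllables, no points
```

Comparing readings rather than characters is what makes 播 an acceptable answer for 波, while 语音 fails because it contains an extra syllable.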

| Number | Teacher | Olle | Score | Google | Score | Apple | Score |
|---|---|---|---|---|---|---|---|
| 1 | bō | 波 | 3 | 播 | 3 | 拨 | 3 |
| 2 | fù | 父 | 3 | 附 | 3 | 付 | 3 |
| 3 | tí | 提 | 3 | 提 | 3 | 提 | 3 |
| 4 | zǒu | 走 | 3 | 走 | 3 | 走 | 3 |
| 5 | ěr | 耳 | 3 | 二二 | 0 | 嗯 | 1 |
| 6 | yǔ | 雨 | 3 | 语音 | 0 | 与 | 3 |
| 7 | zhì | 志 | 3 | 至 | 3 | 据 | 0 |
| 8 | shā | 沙 | 3 | 杀 | 3 | 沙 | 3 |
| 9 | cè | 策 | 3 | 测 | 3 | 测 | 3 |
| 10 | péi | 陪 | 3 | 培 | 3 | 陪 | 3 |
| Total | | | 100% | | 80% | | 83% |
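For readers who want to check the totals, the percentages are simply the points earned divided by the points available (three per item). A minimal sketch, with the score lists copied from the Score columns of the table above and a hypothetical helper name:

```python
# Scores (0-3) per item, copied from the Score columns of the table above.
google_scores = [3, 3, 3, 3, 0, 0, 3, 3, 3, 3]
apple_scores = [3, 3, 3, 3, 1, 3, 0, 3, 3, 3]


def total_percent(scores, max_per_item=3):
    """Share of the available points earned, rounded to a whole percentage."""
    return round(100 * sum(scores) / (max_per_item * len(scores)))


print(total_percent(google_scores))  # 24 of 30 points -> 80
print(total_percent(apple_scores))   # 25 of 30 points -> 83
```

With only ten items, a single misrecognised syllable moves the total by up to ten percentage points, so these figures should be read as rough indications.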

On the whole, both did better on single syllables than I thought! This was indeed one of the main reasons I wanted to test this, since I knew that sentences work pretty well already.

The main problem, which is confirmed by non-systematic testing of my own, is dipping third tones, which are often incorrectly parsed as two syllables. You can see this for 耳, which led to 二二 on Android and 嗯二 on iOS. The same problem occurred for yǔ on Android, but curiously not on iOS. I’m a bit surprised that iOS got zhì wrong, though, since the vowel in 据 (jù) is quite different.

What this means for you as a learner

The above results mean that you can’t fully trust speech recognition to get single-syllable words correct, even if they are clearly pronounced by a native teacher. This is particularly true for dipping third tones, but could affect other syllables as well. On the whole, though, clearly pronounced Mandarin syllables are correctly identified roughly 80% of the time.

In practice, this means that if your phone spits out something other than what you intended to say, you probably have a problem, unless it was a third tone, in which case the software could be at fault. Of course, this doesn’t mean that you’re okay if it spits out what you intended to say; that question will be answered in the next article.

B) Disyllabic words

Now, let’s look at two-syllable words, the bread and butter of the Chinese language and something I often advise students to focus on when it comes to tones.

Scoring here works by adding one point for every attempt the software got it right out of a total of three attempts, so 3 is a perfect score.

| Number | Teacher | Olle | Score | Google | Score | Apple | Score |
|---|---|---|---|---|---|---|---|
| 11 | nǚrén | 女人 | 3 | 女人 | 3 | 女人 | 3 |
| 12 | ěrduo | 耳朵 | 3 | 耳朵 | 3 | 耳朵 | 3 |
| 13 | suíjī | 随机 | 3 | 随机 | 3 | 随机 | 3 |
| 14 | xiàngxià | 向下 | 3 | 向下 | 3 | 向下 | 2 |
| 15 | pínqióng | 贫穷 | 3 | 贫穷 | 3 | 贫穷 | 3 |
| 16 | lǎoshī | 老师 | 3 | 老师 | 3 | 老师 | 3 |
| 17 | qīngchu | 清楚 | 3 | 清楚 | 3 | 清楚 | 3 |
| 18 | liǎojiě | 了解 | 3 | 了解 | 3 | 了解 | 3 |
| 19 | rùnzé | 润泽 | 3 | 润泽 | 3 | 润泽 | 3 |
| 20 | bózi | 脖子 | 3 | 脖子 | 3 | 脖子 | 3 |
| Total | | | 100% | | 100% | | 97% |

Not surprisingly, both did better with two-syllable words than with single-syllable words, which makes sense based on what I said above about context. All these are also reasonably common dictionary entries and should be present in any database of Chinese vocabulary. I’m particularly impressed by the fact that both got 润泽 correct, which is not, I assume, something people normally say to their phones (it means “glossy”).

What this means for you as a learner

The above results mean that if you pronounce words correctly and clearly, it’s very likely that your phone will be able to identify them correctly, even if the word is not straightforward or common. This means that if you say a word and your phone gets it wrong, you probably aren’t pronouncing it perfectly.

A friendly reminder: This doesn’t mean that if the speech recognition gets it right, your pronunciation is good. That will be the topic of the next article.

C) Phrases

Now we’re approaching the home territory of speech recognition software. It’s not normal for people to ambush their phones by suddenly saying strange things like “glossy”, but it is normal to dictate a sentence or ask a question. Let’s see if it works as well as it ought to!

Scoring here was done by deducting one point for every incorrectly identified character, with a maximum of three points as before.
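The deduction rule can be written down as a short Python sketch. It is an illustration under simplifying assumptions rather than the method used for the scores in this article, which were assigned by hand: the function name is hypothetical, characters are compared position by position (so an inserted or dropped character would shift everything after it), and the score is not allowed to go below zero.

```python
def score_phrase(expected, transcribed, max_score=3):
    """Deduct one point per mismatched character, never going below zero."""
    # Compare position by position; each differing character is one error.
    errors = sum(1 for a, b in zip(expected, transcribed) if a != b)
    # Characters missing from, or added to, the transcription also count.
    errors += abs(len(expected) - len(transcribed))
    return max(max_score - errors, 0)


print(score_phrase("他是不是王老师", "他是不是王老师"))      # identical, full score
print(score_phrase("麻烦你把盐递给我", "麻烦你把炎帝给我"))  # two characters differ
```

Applied mechanically, this rule deducts two points for item 22, because two characters differ. The score given for that item in this article deducts only one, since the transcription is still correct on the syllable level, which shows that hand scoring involves some judgement that a simple character comparison does not capture.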

| 21 | Tā shìbushì Wáng lǎoshī. | Score |
|---|---|---|
| Olle | 他是不是王老师 | 3 |
| Google | 他是不是王老师 | 3 |
| Apple | 他是不是王老师 | 3 |

| 22 | Máfan nǐ bǎ yán dì gěi wǒ. | Score |
|---|---|---|
| Olle | 麻烦你把盐递给我 | 3 |
| Google | 麻烦你把盐递给我 | 3 |
| Apple | 麻烦你把炎帝给我* | 2 |

| 23 | Wǒ yídìng yào qù Měiguó. | Score |
|---|---|---|
| Olle | 我一定要去美国 | 3 |
| Google | 我一定要去美国 | 3 |
| Apple | 我一定要去美国 | 3 |

| 24 | Qǐngwèn, wǒ kěyǐ jìnlai ma? | Score |
|---|---|---|
| Olle | 请问,我可以进来吗? | 3 |
| Google | 请问,我可以进来吗? | 3 |
| Apple | 请问,我可以进来吗? | 3 |

| 25 | Fángzū yígòng shì yìqiān wǔbǎi yīshí yuán. | Score |
|---|---|---|
| Olle | 房租一共是一千五百一十元 | 3 |
| Google | 房租一共是一千五百一十元 | 3 |
| Apple | 房租一共是一千五百一十元 | 3 |

- Human score: 100%

- Google’s score: 100%

- Apple’s score: 93%

As expected, both phones did a good job here, correctly transcribing everything on the syllable level (more about item 22 in a bit). Please note that the number of syllables tested here is much larger than in the previous tests, yet no syllable-level errors were made, again because of context. Still impressive!

Item 22 is a bit puzzling, because while it is correct on the syllable level, it’s clearly not correct on the word level. For a human, this is obviously wrong, because 炎帝, “the Yan/Flame Emperor” is typically not something you ask someone to give you, whereas 盐, “salt”, is something you commonly pass along to other people.

If you listen to the recording, it’s quite clear that there is a gap between yán and dì, so I think this transcription should be judged as incorrect based purely on the auditory cues available. However, since it’s still technically right on the syllable level, I’ve just deducted one point.

What this means for you as a learner

All this means that as long as your sentences are fairly normal, you can expect perfect pronunciation to result in perfect transcriptions. There might be minor deviations and weird cases, but on the whole, speech recognition does a really good job here.

Friendly reminder (again): This doesn’t mean that a correct transcription means that your pronunciation is good; more about this in the next article.

Conclusion

The purpose of this article was to answer this question:

If I say something and the speech recognition spits out something else, does that really mean that my pronunciation is bad, or could it be that the speech recognition is wrong?

As we have seen, the accuracy here hovers close to 100% for two-syllable words and phrases, and around 80% for single-syllable words.

Thus, if it produces something other than what you intended to say, it’s likely that your pronunciation is not perfect. This is more true for sentences than it is for monosyllabic words, where the error rate is higher.

In the next article, I will use student recordings to check how speech recognition handles non-perfect input. The main goal will be to see how lenient speech recognition is, but also to see how it deals with non-native audio in general:

How good is voice recognition for learning Chinese pronunciation?

Caveats and more research

There are of course many caveats here. This is a blog post, not a serious research article (though it would make an excellent topic for one). To verify these results, everything would need to be standardised, all other variables kept constant, the number of speakers increased, the amount of language covered widened, and so on.

As some form of compensation, I tried all the words and sentences presented in this article myself, both with Android and iOS, and had results comparable to those presented in this article. It could be that there are teachers with excellent pronunciation who aren’t recognised properly, perhaps because they have very unusual voices or something, but I doubt it.

In general, good pronunciation will result in accurate transcriptions.

Further reading

I did some quick searches for previous studies regarding the reliability of speech recognition for language learning purposes, but they were either old or dealt with the problem on a much more abstract level. If you know of any other research, please let me know!

Mushangwe, H. (2014). Using voice recognition software in learning Chinese pronunciation. International Journal of Instructional Technology & Distance Learning, 11(3), 3-18.

Zinovjeva, N. (2005). Use of speech technology in learning to speak a foreign language. Speech Technology, 46(2), 47-83.
